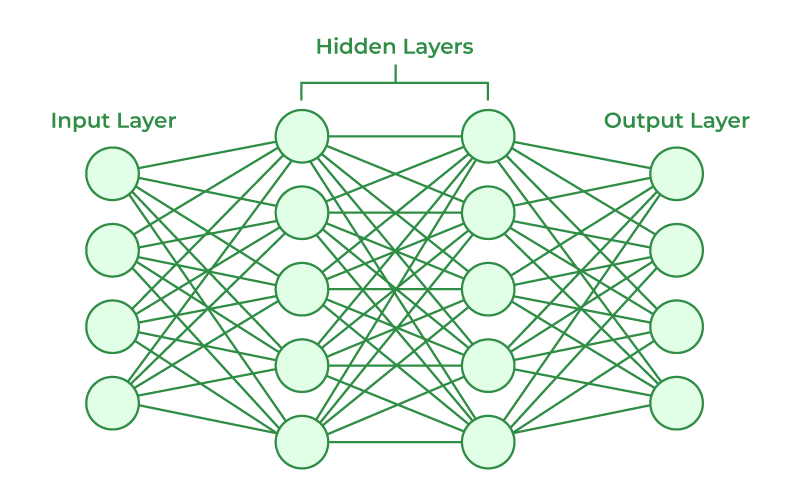

We are surrounded by digital technology in our modern world. From phones, to computers, to even the woven cloth we wear as clothes, most things can be broken down into discrete symbols or building blocks (like 1s and 0s in code, or “over/under” for woven things). However, one new digital technology is taking our society by storm: AI. Some background on the nature and mechanisms of artificial intelligence can be found in some of the other posts on this blog. In this post, however, I will be discussing people’s preconceived ideas about AI in the STEM field.

As many people know, AI is becoming more and more capable of replacing people in jobs such as manual labor, clerical work, factory work, and various writing positions, just to name a few. It wouldn’t be unreasonable to assume that it will take over EVERY job we have, and to be scared of that. However, this isn’t really the case (yet…). One good example of jobs that can’t be replaced by AI yet is those in STEM fields. In short, AI can’t replace more complex jobs like those, but it can help speed up the work.

In my case, I am a biochemistry major. One line of work that relates to my major is working with genes and DNA. The human genome contains tens of thousands of genes that interact with one another in unimaginably complex ways to help our bodies function, make us look a certain way, and make us who we are. Trying to manually determine what each of these individual genes does is practically impossible given how much time it would take. However, with AI, this process can be sped up dramatically: AI can retrieve data about genes in seconds, link gene functions autonomously from a library of genes, and simulate what they could theoretically do without anyone having to carry out long experiments. As of right now, though, AI cannot account for genes that are not in its database or decide what experiments to run on new genes, so the ‘field of exploration’ is still mainly in the hands of humans.

One thing to take away from this is that you should not be scared of AI. Instead, you should embrace it and learn how to use it, because it’s here to stay. It will only damage your position if you let it; you must harness it to accelerate your progress and expand your capabilities beyond what your time would otherwise allow.